Notion

Notion is a flexible workspace tool for organizing personal and professional tasks, offering customizable notes, documents, databases, and more.

This Notion dlt verified source and pipeline example load data from the Notion API to the destination of your choice.

Sources that can be loaded using this verified source are:

| Name | Description |
|---|---|
| notion_databases | Retrieves data from Notion databases. |

Setup Guide

Grab credentials

- If you don't already have a Notion account, please create one.

- Access your Notion account and navigate to My Integrations.

- Click "New Integration" on the left and name it appropriately.

- Finally, click on "Submit" located at the bottom of the page.

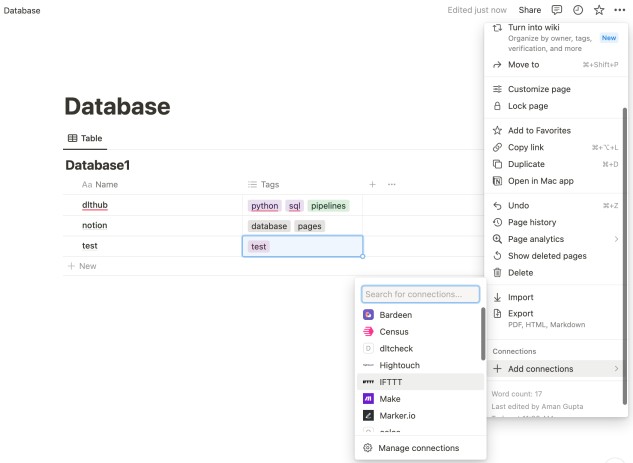

Add a connection to the database

Open the database that you want to load to the destination.

Click on the three dots located in the top right corner and choose "Add connections".

From the list of options, select the integration you previously created and click on "Confirm".

Note: The Notion UI, which is described here, might change. The full guide is available at this link.
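Once the connection is added, one way to sanity-check it before running a pipeline is to ask the Notion API for the database directly. The sketch below only builds the request and does not send it. It assumes the public https://api.notion.com/v1/databases/{id} endpoint and the 2022-06-28 API version header; the helper name build_database_request is made up for this illustration and is not part of dlt or the verified source.

```python
import urllib.request

NOTION_API_BASE = "https://api.notion.com/v1"
NOTION_VERSION = "2022-06-28"  # assumed API version; adjust to the one you target


def build_database_request(database_id: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a GET request for a single Notion database."""
    return urllib.request.Request(
        url=f"{NOTION_API_BASE}/databases/{database_id}",
        headers={
            "Authorization": f"Bearer {api_key}",
            "Notion-Version": NOTION_VERSION,
        },
        method="GET",
    )


# Placeholder ID and token; substitute your own values.
request = build_database_request("d8ee2d159ac34cfc85827ba5a0a8ae71", "secret_XXX")
print(request.full_url)
```

Passing the request to urllib.request.urlopen should return the database's JSON if the integration has access. An HTTP 404 typically suggests that the connection was not added to that database.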

Initialize the verified source

To get started with your data pipeline, follow these steps:

Enter the following command:

    dlt init notion duckdb

This command will initialize the pipeline example with Notion as the source and duckdb as the destination.

If you'd like to use a different destination, simply replace duckdb with the name of your preferred destination.

After running this command, a new directory will be created with the necessary files and configuration settings to get started.

For more information, read the guide on how to add a verified source.

Add credentials

In the .dlt folder, there's a file called secrets.toml. It's where you store sensitive information securely, like access tokens. Keep this file safe. Here's its format:

    # Put your secret values and credentials here
    # Note: Do not share this file and do not push it to GitHub!
    [sources.notion]
    api_key = "set me up!" # Notion API token (e.g. secret_XXX...)

Replace the value of api_key with the secret token of the integration you created above. This ensures that the verified source can access your Notion resources securely.

Next, follow the instructions in Destinations to add credentials for your chosen destination. This ensures that your data is properly routed to its final destination.

For more information, read the General Usage: Credentials.
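dlt can also read the same secret from an environment variable instead of secrets.toml, which can be convenient in CI or containers. The sketch below assumes dlt's naming scheme, in which the configuration sections are upper-cased and joined with double underscores. The helper env_var_name is hypothetical and written only for this example; it is not a dlt function.

```python
import os


def env_var_name(*sections: str) -> str:
    """Join config sections into a dlt-style environment variable name."""
    return "__".join(part.upper() for part in sections)


key_name = env_var_name("sources", "notion", "api_key")

# Placeholder value only; use your real integration token in practice.
os.environ[key_name] = "secret_XXX"
print(key_name)  # SOURCES__NOTION__API_KEY
```

Setting the variable in the shell or in your deployment's secret store, rather than in Python, keeps the token out of the codebase.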

Run the pipeline

- Before running the pipeline, ensure that you have installed all the necessary dependencies by running the command:

      pip install -r requirements.txt

- You're now ready to run the pipeline! To get started, run the following command:

      python notion_pipeline.py

- Once the pipeline has finished running, you can verify that everything loaded correctly by using the following command:

      dlt pipeline <pipeline_name> show

  For example, the pipeline_name for the above pipeline example is notion; you may also use any custom name instead.

For more information, read the guide on how to run a pipeline.

Sources and resources

dlt works on the principle of sources and resources.

Source notion_databases

This function loads Notion databases into the destination.

    @dlt.source
    def notion_databases(
        database_ids: Optional[List[Dict[str, str]]] = None,
        api_key: str = dlt.secrets.value,
    ) -> Iterator[DltResource]:
        ...

database_ids: A list of dictionaries each containing a database id and a name.

api_key: The Notion API secret key.

If "database_ids" is None, the source fetches data from all integrated databases in your Notion account.

Note that the data is loaded in "replace" mode: each run completely replaces the existing data in the destination.
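To make the "replace" behaviour concrete, here is a toy in-memory model of write dispositions. It only illustrates the semantics and is not how dlt implements loading; the load_rows function and the dictionary of tables are invented for this sketch.

```python
from typing import Dict, List


def load_rows(
    tables: Dict[str, List[dict]],
    table_name: str,
    rows: List[dict],
    write_disposition: str = "replace",
) -> None:
    """Toy model: 'replace' overwrites the table, 'append' adds to it."""
    if write_disposition == "replace":
        tables[table_name] = list(rows)
    elif write_disposition == "append":
        tables.setdefault(table_name, []).extend(rows)
    else:
        raise ValueError(f"unsupported write disposition: {write_disposition}")


destination: Dict[str, List[dict]] = {}
load_rows(destination, "db_one", [{"id": 1}, {"id": 2}])  # first run
load_rows(destination, "db_one", [{"id": 3}])  # second run replaces the first
print(destination["db_one"])  # [{'id': 3}]
```

Under this model, rows that were removed in Notion disappear from the destination on the next run, and no history is kept.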

Customization

Create your own pipeline

If you wish to create your own pipelines, you can leverage source and resource methods from this verified source.

Configure the pipeline by specifying the pipeline name, destination, and dataset as follows:

    import dlt
    from notion import notion_databases  # source module created by dlt init

    pipeline = dlt.pipeline(
        pipeline_name="notion",  # Use a custom name if desired
        destination="duckdb",  # Choose the appropriate destination (e.g., duckdb, redshift, postgres)
        dataset_name="notion_database",  # Use a custom name if desired
    )

To read more about pipeline configuration, please refer to our documentation.

To load all the integrated databases:

    load_data = notion_databases()
    load_info = pipeline.run(load_data)
    print(load_info)

To load only selected databases:

    selected_database_ids = [{"id": "0517dae9409845cba7d", "use_name": "db_one"}, {"id": "d8ee2d159ac34cfc"}]
    load_data = notion_databases(database_ids=selected_database_ids)
    load_info = pipeline.run(load_data)
    print(load_info)

The database ID can be retrieved from the URL. For example, if the URL is:

    https://www.notion.so/d8ee2d159ac34cfc85827ba5a0a8ae71?v=c714dec3742440cc91a8c38914f83b6b

The database ID in the given Notion URL is: "d8ee2d159ac34cfc85827ba5a0a8ae71".

The database ID in a Notion URL is the string right after notion.so/, before any question marks. It uniquely identifies a specific page or database.

The database name ("use_name") is optional; if skipped, the pipeline will fetch it from Notion automatically.
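Extracting the ID by hand is easy to get wrong, so a small helper can do it. The following is a minimal sketch using only the standard library. The function names database_id_from_url and database_entry are hypothetical, and the handling of a title slug in front of the ID is an assumption about URL shapes that the text above does not cover.

```python
import re
from typing import Dict, List, Optional
from urllib.parse import urlparse

_ID_PATTERN = re.compile(r"[0-9a-f]{32}")


def database_id_from_url(url: str) -> str:
    """Return the 32-character hex ID from the path of a Notion database URL."""
    # urlparse separates the path from the "?v=..." query string.
    last_segment = urlparse(url).path.rstrip("/").split("/")[-1]
    # Assumption: some URLs prefix the ID with a title slug ("My-DB-<id>").
    candidate = last_segment.split("-")[-1].lower()
    if not _ID_PATTERN.fullmatch(candidate):
        raise ValueError(f"no database ID found in URL: {url}")
    return candidate


def database_entry(url: str, use_name: Optional[str] = None) -> Dict[str, str]:
    """Build one item for the database_ids argument of notion_databases."""
    entry = {"id": database_id_from_url(url)}
    if use_name is not None:
        entry["use_name"] = use_name
    return entry


url = "https://www.notion.so/d8ee2d159ac34cfc85827ba5a0a8ae71?v=c714dec3742440cc91a8c38914f83b6b"
selected_database_ids: List[Dict[str, str]] = [database_entry(url, use_name="db_one")]
print(selected_database_ids)
```

The resulting list has the same shape as selected_database_ids in the example above and can be passed as database_ids to notion_databases.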

Additional Setup guides

- Load data from Notion to PostgreSQL in python with dlt

- Load data from Notion to AWS Athena in python with dlt

- Load data from Notion to Neon Serverless Postgres in python with dlt

- Load data from Notion to Databricks in python with dlt

- Load data from Notion to EDB BigAnimal in python with dlt

- Load data from Notion to Dremio in python with dlt

- Load data from Notion to CockroachDB in python with dlt

- Load data from Notion to Azure Cloud Storage in python with dlt

- Load data from Notion to Snowflake in python with dlt

- Load data from Notion to BigQuery in python with dlt